It’s been almost three months since the last instalment in this series, so it’s high time for another update on our progress. In this update, as promised I will share some information about our music font, Bravura, and the project we have been leading to standardise the layout of music fonts, and some insights into how we are going to build the user interface for the new application.

Bravura

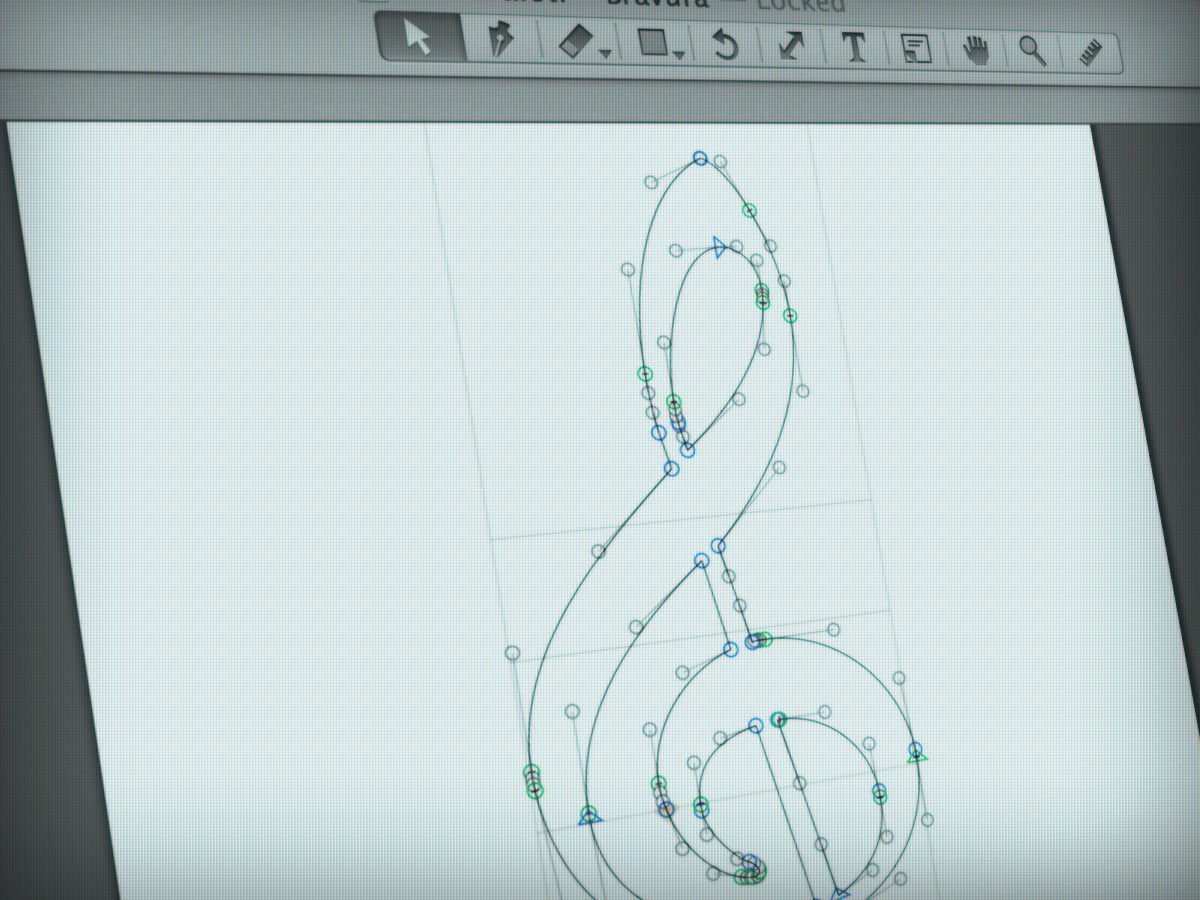

We released the first pre-release version of our music font, Bravura, in May of last year. One year later, we are on the cusp of releasing version 1.0 of the font. That first release, version 0.1, contained around 800 unique glyphs. The current version, version 0.99, contains nearly 2400 unique glyphs (plus almost 400 stylistic alternates and ligated forms for some of the glyphs). Hundreds of hours of work have gone into the font, and overall I am really pleased with both the wide coverage of different kinds of music notation – from early chant right up to pictograms for including electronic elements in performance – and the consistency of its visual appearance, which has retained the blackness and boldness that I set out to capture at the start of the project.

Because we have released it under the very permissive SIL Open Font License, it is free for anybody to use, modify, redistribute or otherwise muck about with. And it has been a thrill to see it being used in lots of different ways, even before its primary intended home, our new scoring application, is ready to go.

First of all, Nicolas Froment, one of the main developers for MuseScore and known online as @lasconic, has used Bravura in a game based on the popular 2048 mechanic, called Breve.

You can play Breve directly in your web browser. The aim of the game is to combine two notes of the same note value to make longer notes using the arrow keys, and to combine all the way up to a breve (double whole note) before you run out of space on the board. I’ve managed to get up to a minim (half note). Can you do better? (Incidentally, if you enjoy Breve, check out Threes, the game on which 2048 and Breve are based, on iOS and Android. It’s a much more subtle and engaging game than 2048.)

Bravura is also being used in the in-development MuseScore 2.0, and it’s also the main music font used in Rising Software’s Auralia and Musition iOS apps, which help students to develop their ear training and music theory skills.

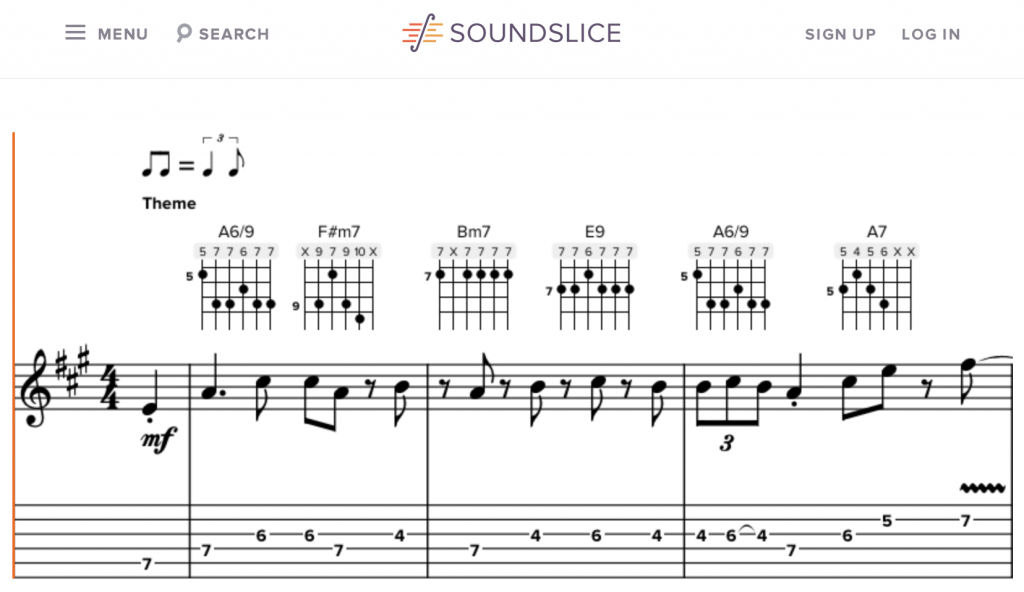

Another great use of Bravura is in Soundslice, a browser-based application that makes it easy to produce detailed, multi-track transcriptions of musical performances from YouTube, and display the results both as tablature and as a score.

Bravura has also already been put to use in LilyPond, and a project is underway to make it easy to use its glyphs in TeX documents via LuaTeX.

Development of Bravura has gone hand in hand with development of the Standard Music Font Layout (SMuFL, pronounced “smoofle”), which is an effort to define a set of guidelines for how music fonts should be constructed, both in terms of which musical symbols should be assigned to which Unicode code points (for example, “A” has the code point U+0041 in all Unicode-compliant fonts), and how glyphs should be scaled relative to each other and positioned relative to the origin point. This is all very technical, but the upshot of the project is that font designers and music software developers have the option of using this standard for their fonts and applications, which should in time make it easier for users to use the same fonts across different applications, and make more compatible fonts overall available.
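For readers who like to see this sort of thing concretely, here is a minimal Python sketch of what "assigning a symbol to a code point" means. The check for "A" is the example given above. The Private Use Area range is standard Unicode. The assumption that SMuFL maps its glyphs into that range, with the G clef at U+E050, comes from my reading of the SMuFL specification and not from anything in this post, so treat the `GLYPHS` table as illustrative only.

```python
# Illustrative only: the glyph name and code point below are assumed from
# the SMuFL specification, not taken from this post.
GLYPHS = {
    "gClef": 0xE050,  # assumed SMuFL code point for the G (treble) clef
}

# Unicode's Private Use Area in the Basic Multilingual Plane.
PUA_START, PUA_END = 0xE000, 0xF8FF


def is_private_use(code_point: int) -> bool:
    """Return True if the code point lies in the BMP Private Use Area."""
    return PUA_START <= code_point <= PUA_END


def format_code_point(code_point: int) -> str:
    """Format an integer code point in the usual U+XXXX notation."""
    return f"U+{code_point:04X}"


print(format_code_point(ord("A")))         # the "A" example from the text
print(format_code_point(GLYPHS["gClef"]))  # the assumed G clef code point
print(is_private_use(GLYPHS["gClef"]))
```

The point of a shared layout is that an application can look up a name such as `gClef` once and expect every compliant font to draw a G clef at that code point.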

You might be surprised at just how many different musical symbols are used in conventional music notation (or CMN, a term coined by Donald Byrd to refer broadly to Western music notation developed over the past three or four centuries). You can now browse through the repertoire of glyphs on the SMuFL web site.

Exercising the musical brain

If you’ve been following this diary, by now you will be familiar with the high-level concept of how our application’s musical brain is constructed: from a large number of small engines, each designed to perform a specific task, chained together in series and in parallel, for maximum efficiency in multi-CPU computers. The main thrust of the development work over the past few months has been to connect together all of the engines that have been built to date, so that we can establish the basic editing loop of the program.

This means, at a high level, taking the basic form in which the music is represented (time-based streams of notes, each of a duration stored as a number of beats, rather than as a specific notated duration), and running the music through each of the discrete engines (some in parallel, some in sequence) to build up a display of music that shows the notes with notated durations appropriate to their rhythmic length, prevailing time signature and position in the bar; positioned in the correct place on the staff according to clef, transposition and octave lines; with accidentals appropriate to the prevailing key signature stacked correctly against chords with multiple noteheads; stems pointing in the correct direction and of an appropriate length; rhythm (augmentation) dots shown where appropriate and positioned correctly; and with rests of the appropriate durations (again, divided relative to their position in the bar and the prevailing time signature) shown at the correct vertical position so as not to collide with the notes… and all of this drawn using appropriate spacing, based on the complex relationship between rhythmic duration and horizontal space aggregated from the rhythms of all of the streams of notes that make up the music.

Once the music data has flowed upwards from its most basic representation all the way to its correctly notated appearance, the user must then be able to interact with the drawn music. The simplest way to close the loop and allow the user to make a change that the application has to process is to allow the user to select a note and delete it (leaving a rest in its place, at least for now), change its pitch, change its duration, or shift it forwards or backwards by a set rhythmic amount.

The edit is then made at the most basic level of the representation, i.e. the time-based streams of notes with durations specified in numbers of beats, rather than directly on the displayed notation, and the whole process runs again, transforming the basic representation into fully notated music. This time, the engines need to process only the range of music that has been affected, typically only a bar or two, and perhaps only for one instrument, so the amount of computation needed to update the notation after a typical edit is normally considerably reduced.
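As a rough illustration of that loop, and emphatically not our actual code, here is a small Python sketch. It assumes a single stream of notes, a constant bar length, and a stand-in `renotate` function in place of the real chain of engines; the names `Note`, `affected_bars` and `renotate` are all hypothetical.

```python
import math
from dataclasses import dataclass
from fractions import Fraction


@dataclass
class Note:
    onset: Fraction     # position in quarter-note beats from the start
    duration: Fraction  # length in quarter-note beats
    pitch: int          # MIDI-style pitch number (an assumption)


def affected_bars(note: Note, beats_per_bar: Fraction) -> range:
    """Return the 0-based indices of the bars that a note touches."""
    first = int(note.onset // beats_per_bar)
    end = note.onset + note.duration
    # A note that ends exactly on a barline does not touch the next bar.
    last = max(first, math.ceil(end / beats_per_bar) - 1)
    return range(first, last + 1)


def renotate(stream, bars: range, beats_per_bar: Fraction):
    """Stand-in for the engine chain: return the notes to re-process."""
    lo = bars.start * beats_per_bar
    hi = bars.stop * beats_per_bar
    return [n for n in stream if n.onset < hi and n.onset + n.duration > lo]


# Two bars of quarter notes in 4/4, all on middle C.
bar_length = Fraction(4)
stream = [Note(Fraction(i), Fraction(1), 60) for i in range(8)]

# The edit is made on the basic representation, not on the drawn notation.
edited = stream[5]
edited.pitch = 62

dirty = affected_bars(edited, bar_length)
reprocessed = renotate(stream, dirty, bar_length)
print(list(dirty), len(reprocessed))
```

Here only the second bar is marked as dirty, so only its four notes are passed back through the (pretend) engines.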

Even though the displayed notation is still crude at the moment, now that we have this basic loop in place, we have confidence that the architecture our crack team of programmers have designed is going to deliver the kind of beautiful automatic engraving and powerful editing features that are central to the vision for our product.

Building the application

At this point, our editing loop and the beginnings of our properly engraved music are being exercised in the same test harness we have been using for more than a year. Next, we will start to hook the musical brain up to the real application that you will eventually be able to use.

The musical brain itself is built in a platform-independent way: it runs on Windows and OS X today, but it can be ported to other operating systems if we want to build applications for other platforms in the future.

To build the real application, we have decided to use the cross-platform Qt application framework. Qt has really sophisticated tools for building user interfaces that can run on multiple platforms, while still retaining the native capabilities of the host operating system, for example, accessibility support via MSAA or VoiceOver, or full screen mode on OS X. Qt also has mature and efficient 2D drawing capabilities (essential for producing beautiful display of music notation on the screen, on paper, and in graphics files like PDFs), robust typography support, and all sorts of other goodies.

Most importantly, Qt will allow us to build an application with an attractive, functional user interface that is consistent across both Windows and OS X, and build it only once. If we had to develop the application on Windows and OS X separately, it would take at least twice as long, and you would have to wait even longer to get your hands on it!

More to come

As always, there is so much more we could talk about: the complexity of building an engine that can figure out how to position notes and chords in multiple voices at the same rhythmic position such that they don’t collide and are laid out according to established engraving convention, with the appropriate number of rhythm dots shown in the right places, and with stems drawn in the right place, spanning the correct staves; the subtleties of when an accidental should cause extra rhythmic space to be allocated, and when it should not; how the audio and playback engine will be integrated into our application; and many other things besides.

But having given you a tantalising glimpse into what we’ve been working on recently, I will try to follow the advice of PT Barnum (or was it Walt Disney?), who said, “Always leave them wanting more.” Hopefully your appetite for information about our application has not been completely satisfied, and you will check back again for another update soon.

Daniel, your updates ALWAYS leave us hungry for more!

In the next update, please tell us more about the audio engine and playback.

Bill

If you haven’t yet, you should check out some of what Noteflight has to offer. I find that their interface is more intuitive and user-friendly than both Sibelius and Finale (granted, I haven’t used the latest iterations of either). Something else I like about Noteflight is the ability to search for other people’s projects on the site and use their work for inspiration or reference, and the similar ability to collaborate with other users. I would love to see some of these features in this new application, as the main drawback of Noteflight is that there’s a 10-score limit for free users, and they only offer a subscription service rather than a pay-once application.

Very cool. Keep up the good work Daniel.

Very exciting to see this

Looking forward!

All this talk of platform independence is getting my hopes up for a Linux version. Sibelius is the only thing keeping me on Windows at the moment.

@Alan: Please don’t get your hopes up for a Linux version. We are fully focused on Windows and OS X and do not have any plans to support any other desktop operating systems.

I’m not going to hold my breath — but if you make a Linux version, I’ll be first in line to buy a copy.

A reasonable compromise might be to add WINE as a “windows” target for development.

So am I! Qt makes it so easy… and also the ‘musical brain’ probably is written in C++ which is as easy as Qt to port… definitely looking forward, even though I would hate to give up on MuseScore…

+1 on Linux!

To add to that… +1 on Cubase Linux, VST Linux, and ASIO Linux. If Steinberg had OS and Kernel control, all the better. And if it’s easy to port, why not? In a world where 1 line of code works on multiple OS’s, I have a very hard time accepting that people don’t port to Linux. I’m fully aware of corporate bullying, so if that’s the only thing holding it back… then the answer is simple: grow a pair and move on. If it’s something else, then what?

-Sean

What version of Qt are you using? I always understood that limitations in the currently available version of Qt were at the heart of Sibelius’s inability to be VoiceOver compatible. This is potentially great news!

@Kevin: We will be using the latest and greatest version (currently version 5.3), and keeping up to date with new updates as they come along, as far as is possible. Back in the day, Sibelius was using Qt 4.8, which doesn’t support VoiceOver on Mac at all, and which has a less well-developed API on Windows than more recent versions of Qt. From a quick look inside the Sibelius 7.5 application bundle on Mac, it appears that the latest version of Sibelius is still using Qt 4.x. Since Qt was sold to Digia by Nokia, it has been my impression that there is a greater emphasis on things like improving accessibility, as demonstrated by e.g. this blog post.

A Major Mahalo and sending much Aloha!

{‘-‘}

It may be too soon to ask this, but what are your plans regarding extracting parts with this new notation software? I hope it won’t be anywhere near as time consuming as it is with Finale….yikes!

@Jose: You won’t need to extract parts in our application: individual parts will simply be alternative views on the same musical data used in the score, with sufficient flexibility to handle all of the kinds of edits you need to make in parts without requiring extracting them into separate files. And when I say “all the kinds of edits”, I mean it. At least, that’s the plan!

Daniel,

Some friendly feedback… I’ve had some issues lately. Maybe I simply lack the knowledge how. But hopefully it helps. These relate to the transition from Cubase to Score not being as smooth as I want.

1) When I export MIDI from Cubase to Sibelius I may either lose data in the format or get unwanted data. Every articulation change becomes a lyric, etc. The mass selection / filter option saves my life here. Please include it in v1.0 if you can.

2) I can’t copy, then paste into another voice on another track.

3) Upon import, I wish I could say ‘these 2 tracks should be merged, use multiple voices’.

4) Mass editing. PLEASE I beg you, there must be an easier way to copy / paste PATTERNS of slurs, techniques, etc… without pasting notes. Sibelius doesn’t make for editing large scores quickly and it drives me mad. 🙁 (unless I’m missing something)

Again, thanks for the update. I’m excited and yes, due to your evil plan, I am hungry for more. 🙂

-Sean

That is profoundly encouraging! While Sibelius makes preparation of parts much simpler than Finale (especially for works where changes are made up to the last minute), none of the currently available products are smart enough to recognise a staff with two (or more) instruments (Flutes, Oboes, Clarinets, Bassoons, Horns…) and create two (or more) parts from them. If the orchestrator did his job properly, a professional copyist has no problem extracting parts properly. Existing rules for orchestration leave no room for ambiguity (for example a single line marked a2, or 1. or Solo), so there should be a way to define a reasonably complete algorithm to figure this out, and to flag ambiguous parts. I am anxiously awaiting this feature in the new tool in order to ditch my current weapon of choice!

Hi Daniel,

I hope that you enable some part of the technology so that parts *could be* extracted if so desired. For our published editions we often *prefer* extracted parts as it gives us added flexibility. The linked parts technology in both Finale and Sibelius is frankly sub-standard and we would hate for any new apps (such as yours) to limit our options for total customisation.

For instance, we spend a large amount of time adding cues to parts (we don’t want these displayed in our full score). Dealing with multi-instrument staves is a nightmare. We also want some notational abbreviations in either the score or part but not both. Please make sure that the new app is not *too-smart* which results in a lack of flexibility. Finale, for us, gives us a heap more customisability and flexibility than the “Sibelius-knows-best” paradigm.

Many thanks,

Ross

@Ross: I hope that we will provide sufficiently advanced control over things like repeats and cues such that you don’t need to extract parts in order to get things looking exactly the way you require them, but of course we will allow the extraction of music for individual instruments to separate project files if that is truly what’s required.

One word of caution for you: we are doing our best to make our new application as automatic as possible from an engraving perspective. If you feel negatively towards Sibelius because of its level of automation, you may feel more negatively still towards our application, because we are really trying to make a lot of advanced engraving decisions for you. The way we look at it is that automation is useful if it actually saves you time, i.e. if the program does on its own what you would have done anyway. When you eventually get the chance to try our program (and I hope you will) it will be very worthwhile learning how to change the settings for the automatic engraving, rather than trying to change the appearance of the music one notated element at a time; we are trying to provide a comprehensive set of options that will allow most reasonable alternative appearances with the flipping of one or two switches. If you don’t like the way something looks in our application, use the options to change the engraving settings rather than immediately deciding to start editing individual items in the score.

Thanks for the Soundslice mention! I’m very grateful for all the work you’ve done on Bravura/SMuFL.

Hi Daniel, your blog is inspiring as always and it’s hard for me to wait to get my hands on the new application. Only one question: a few days ago I read a post from Apple saying that QuickTime will not be developed further and will not be moved to 64-bit. Might that cause problems with compatibility or performance on future Macs?

With my very best wishes, Fabian

@Fabian: In case there’s any confusion, Qt is not QuickTime; Qt is a cross-platform application framework managed and developed by a company called Digia. As for the future of QuickTime itself, I don’t think you need to worry about this. Apple frequently deprecates older technologies, but they always ensure that there is a suitable modern replacement. There’s no danger that current or future versions of OS X will be unable to play video and audio files in a variety of formats.

Hi Daniel, thanks for explaining!

Many thanks Daniel, another great update and I have spent some time working my way through the SMuFL repertoire of glyphs which is proving to be very useful

Starting to think of a future update 4 or 5 of your present programming endeavors as a finale dream-come true for all kinds of composers and performers, please listen to your users then as now 🙂 Congrats with the side-line success you already have (y) … Having all these wonderful virtual & analog instruments at hand and now this coming score-app at DAW this modern day composer does sure long for the Yamaha Vocaloid Technology too to deliver able authentic voices ready to sing “from Score” …. good work

When you say beats, do you actually mean beats? I’m thinking of a situation where, say, there is a minim in a 2/2 bar tied over to a crotchet in a 2/4 bar. Leaving aside possible tempo changes, the note in question surely has one beat in each bar. Is the note, then, a two-beat note in the basic representation (in which case nudging it to the left would cause it to fill the 2/2 bar, while nudging it to the right would cause it to fill the 2/4 bar)? Or is it a three-crotchet note (in which case the nudge behaviour would be rather different)? Personally I rather hope it’s the former – as long as the user has control over how many beats there are in a bar, independently of the time signature. (This would also yield nice behaviour when changing a time signature from 7/4 to 7/8, say: all the crotchets would become quavers etc., but the number of bars would remain the same.)

Very much enjoying these updates. Thanks for keeping us posted.

@Sandy: When I say “beats”, I’m not being very precise: it’s actually quarter note (crotchet) beats, regardless of time signature. Time signatures, and hence bars, are imposed upon this basic data, rather than notes being stored within bars. In other notation software, a half note (minim) starting on the last quarter of a 2/2 bar would typically be stored as a quarter, a tie, and another quarter, but that’s not how our engine works: in our application, that half note is stored as a single note of two quarters in length, and when all of the processors do their stuff, it ends up being notated as a quarter tied to another quarter either side of a barline. But our application knows it’s still just one note, so if you change the pitch of either quarter you can see, the pitch of both quarters you can see will be changed, because in reality only that single two quarter beat note has been edited. This will also enable us to make sure that things like changing the accidental on a tied note, or adding articulations to a range of notes that include ties, will do the right thing (e.g. not show a staccato on the first and last notes of a tied pair), etc.
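To illustrate the idea, here is a hedged Python sketch of how one stored note might be turned into tied notated pieces at barlines. This is not our engine's code: the function name `split_at_barlines` is made up, and it assumes a constant bar length measured in quarter-note beats.

```python
from fractions import Fraction


def split_at_barlines(onset, duration, beats_per_bar):
    """Split one stored note into the tied segments that get notated.

    All values are in quarter-note beats. A constant bar length is
    assumed, which is a simplification made for this sketch.
    """
    segments = []
    position = onset
    end = onset + duration
    while position < end:
        # Start of the bar after the one that contains `position`.
        next_barline = (position // beats_per_bar + 1) * beats_per_bar
        segment_end = min(end, next_barline)
        segments.append((position, segment_end - position))
        position = segment_end
    return segments


# A half note (two quarters long) starting on the last quarter of a 2/2 bar.
pieces = split_at_barlines(Fraction(3), Fraction(2), Fraction(4))
for start, length in pieces:
    print(f"starts at beat {start}, lasts {length} quarter(s)")
```

The stored note is never replaced by the two pieces; they are only derived for display, which is why an edit to either visible quarter is really an edit to the single underlying note.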

Thanks for clarifying that, @Daniel. I’m pleased to hear that the basic representation stores notes as single entities, even when they are rendered in certain contexts using tied noteheads. That makes perfect sense to me (although it probably shouldn’t matter one way or another to the end user, except in that well-designed software is much more likely to work properly!).

Now, given that the software isn’t using a more abstract notion of ‘beat’ in the underlying representation (as I’d hoped it would), will the software nevertheless be able to cope with changes of time signature in the way that I suggested?

To consider another example, suppose I am writing a piece in 2/4 with straight quaver movement in most parts and quaver triplets in some parts, but where the triplets gradually come to dominate the rhythmic character later in the piece. I might decide after the fact that I want to change the time signature mid-way through the piece to 6/8 – so my triplet quavers become quavers and any lingering straight quaver patterns are rendered as dotted quavers. (The abstract beat, or pulse, remains two-in-a-bar throughout.) Will I be able to make that change without too much pain?

@Sandy: We haven’t specified in detail all of the editing operations you’ll be able to accomplish in our application, but converting simple to compound and vice versa should certainly be possible.

I find these technical details and design decisions super interesting, especially as I’m developing with my own toy notation software (with SMuFL!). Thanks a lot for sharing them! It sounds like a nice approach that would make it super easy to have a sequencer view, to implement playback or import midi files. It makes sense that the score view is only one of many, and the only one that really needs to know about dotted notes and that kind of stuff.

The one thing I’m curious about is how you deal with notes tied to triplet notes. For example, a quarter note tied to the first note of a triplet of eighths. From your description, that would correspond to a note of 4/3 beats followed by two notes of 1/3 beat. But then, does the layout engine deduce that this should be represented using a triplet? If so, isn’t it possible to have ambiguities as to the kind of tuplet and where it starts/ends? Do you have additional metadata for this? I love this stuff 🙂

@Tristan: Notes are always stored in rational durations (i.e. fractions of quarter beats), and they also know what kind of tuplet they belong to. I believe that notes are actually stored inside a tuplet using their non-tuplet durations (i.e. an eighth note in a tuplet of ratio 3:2 for eighth notes is stored as an eighth note, not as a note two-thirds the duration of an eighth note), but with full knowledge of their tuplet context. All notes in fact effectively belong to a tuplet of ratio 1:1, which is something the team decided to enforce to ensure that all editing operations and musical transformations work equally well on notes belonging to tuplets as those that don’t belong to tuplets.
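As a sketch of what I have described (and bearing in mind that I am describing it from memory), a note could carry its written duration plus a tuplet ratio that defaults to 1:1. The class and field names below are hypothetical, chosen only for this Python example.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class TupletNote:
    notated: Fraction      # written duration in quarter-note beats
    ratio: tuple = (1, 1)  # (notes played, in the time of); 1:1 means no tuplet

    @property
    def sounding(self) -> Fraction:
        """Actual length in quarter-note beats once the ratio is applied."""
        played, in_time_of = self.ratio
        return self.notated * Fraction(in_time_of, played)


eighth = Fraction(1, 2)
plain = TupletNote(eighth)            # an ordinary eighth note, ratio 1:1
triplet = TupletNote(eighth, (3, 2))  # an eighth note inside a 3:2 tuplet

print(plain.sounding)          # half a quarter-note beat
print(triplet.sounding)        # a third of a quarter-note beat
print(1 + triplet.sounding)    # a quarter tied to the first triplet eighth
```

Because every note has a ratio, code that works on durations never needs a special case for "not in a tuplet".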

Hot dig, Daniel! Loved your interview with the high school group and all your blogs. I’m 76 and hope to get the new program before I’m too old to learn it! 😉 I started with Finale before it was on the market, switched to Sibelius with which I’ve become disenchanted. Keep up the good work as development overseer and your success as marketer will be assured!

I thoroughly enjoy reading these updates. As a computer engineering student who has worked with many others, I know the difficulty and effort it takes to produce such well-written, clear, and understandable pieces that don’t oversimplify or get too technical or become too condescending. You seem to have struck a really nice balance, so bravo!!

As a music student, aspiring conductor, music notation junkie, I’ve been very very excited to see all this progress and the features, and I can’t wait to test drive it at some point. I’ve spent hours and years working with a competing product that rhymes with re-rally, and I’d really like to see how much this will blow that one out of the water. All the work with the fonts also appeals very much to my aesthetic typography senses!

As someone whose life is dominated by these two disparate fields, I can’t explain how much I enjoy reading these and how much I look forward to reading more!

And now I have to get back to deciding what I want to do with my life…

Looks like a bright future thanks to Daniel and friends.

You guys are great! I was impressed, browsing through Bravura, not only how thorough it is, but how much of an impact the availability of such a thorough, high-quality font will have on the industry. I can’t wait to get my hands on the software! In the mean time, I might have actual use for Bravura’s French lute notation. Keep up the good work, everyone!

Hope you set it up so it can have plugins downloaded directly as Sib 7 & 7.5 can.

If the mention of using SMuFL glyphs in LaTeX documents refers to me, I’d have to say that “underway” is putting it highly optimistically 😉

Ah, and if it *doesn’t* refer to me, I’d be more than interested in what else you mean.

@Urs: I was contacted by another individual who is working on integrating Bravura glyphs into LuaTeX. I won’t reveal his name here as I don’t have permission to do that. He told me that he had already got the basic glyphs displaying after working out a way to convert the SMuFL JSON metadata into a suitable format to create entities based on the glyph names.

All your work sounds really good, up to the level of excellence Steinberg usually supplies.

For me there are a few things I dream of.

1] Being able to get an articulation of a MIDI instrument in a natural way in the score. So, you are writing for cello using East West, you write in a phrase, the second note is staccato, and on the last you need to control vibrato in some natural, non-mechanistic way: you right-click… you see your options, “Select Articulation”… you see a Vibrato field ready to mix.

2] When importing a MIDI score, it would be great to ‘parse’ the score with some wizard like functions, so you can add the powerful features of Steinberg scoring. So, after importing the score you get to go through note by note, applying relevant settings. “Parsing a Score”.

3] It would be good to have a phrase library, where you could store custom arpeggios and paste them in in various ways, e.g. reverse octave, revoiced, transposed… altered timings…

Can’t wait for the next release! Thanks for sharing!

Good, good progress as it seems!

Bravura looks very mature now.

Keep up the hard work!..

Hi Daniel,

while reading your blog, I wanted to ask whether you are thinking about the possibility of scanning notes/scores (like MusicScore X or Photoscore or Capella Scan)?

Thanks for this great look into your work. I am waiting for the new application .. 🙂

Greets

Oliver

@Oliver: We don’t have the expertise in-house to develop music scanning/recognition software ourselves, but we intend to make it easy to import music from existing music scanning applications by way of e.g. MusicXML files.

Hi!

I’m following your blog with great interest. Do you have plans for more advanced rhythmic notation, like the possibility of having independent time signatures? That is, 3/4 in one group of instruments and 5/8 in another group!?

Or the possibility to have independent repeat signs?

I’m really looking forward to following the development of this exciting new program!

All best!

/Peter

@Peter: We are certainly hoping to support these kinds of notations in our new application.

Hmm, it’s very hard *not* to share a link here 😉

If only more people could know! 😉

Just in case it counts, a vote for Alan F. A Linux version would be a major plus!

Your careful re-examination of basic elements of music notation, such as fonts, is fascinating. But I am less than impressed with the musical example you provided of Bravura typeset with MuseScore. The noteheads appear to me heavy-handed: more rounded than elliptical on a slant. They lack the style and aesthetic beauty of the noteheads found in graceful French scores, such as Durand and Eschig. They resemble more the heavy font used by Finale (ugh!) rather than the gorgeous font used by Sibelius, or the classic font used in Score (which was modeled after Schott). The justification in the example seems a little cramped, but that might be the fault of clunky MuseScore. In the design of a music font, aesthetic considerations and style are very significant. The overall visual appeal of a typeset page of music is just as important as its legibility.

MS

@Mark: Music fonts are of course a matter of taste. You can’t please all the people all the time. You might find that Bravura looks better to your eyes once you see it in our application, or you may never like it. Fortunately you will be able to use any music font you please with our application.

Mark,

I personally figure I’ll just use Bravura and the Sibelius for different uses. If this new software is as capable as it sounds like it will be, I’m sure it will be simple to choose what you want to do.

Daniel,

The aim of the Microsoft Ribbon in Office was to bring power-user functionality to the masses. It effectively did that. In Sibelius, I did like the ribbon attempt in that it was better than the previous UI. But all I want to suggest is that you guys try to apply the same motto if possible. The easier advanced features are to use, and the easier it is for a novice to create a truly beautiful score, the better. 🙂

No need to reply. I realize I’m just venting random opinions on here. And I’ll keep doing it until I get the easier CC MIDI editing I want! 😉

-Sean

Yes a better and easier way to put CCs to the score is a big wish from me too

Daniel: Is there an idiots’ guide to using Bravura with Sibelius anywhere? I’m having no luck at all with it, and would really like to give it a go

@Andrew: You can’t use Bravura directly with Sibelius as it stands, but Sibelius user Andrew Moschou has made a Bravura derivative called Taneyev you can use in Sibelius. Check out his web site to download the font.

Aha! Thanks

Is it too early to ask if there will be a scripting language in your new software such as ManuScript? I always hoped that the scripting would be a bit more robust to allow one to also do algorithmic and generative processes within the notation program with the extreme being this:

http://opusmodus.com/

@nunativs: Our new application will include a Lua scripting engine.

Daniel,

I wondered if you ever considered optionally creating an editable audio file for each note rather than just sending MIDI info fed into a soft synth. This would considerably enhance the control of playback and also allow for more flexible use in electro-acoustic music, for instance. Perhaps computers are not quite up to this yet. What do you think?

Also, I wonder, do we really need a beat? Music, after all, is basically sounds in time so it might be possible to simply store the position and duration of a note relative only to the time from beginning of a score. Different tempos could then be superimposed on it with different notational results. This would allow for conversion to and from proportional notation, for instance.

@Kenneth: We made the choice to have the musical brain think in beats early on. We felt that working purely in time (ticks, or some other similar unit) would have some disadvantages compared to working in beats, since we can use fractions to create complex things like tuplets with no loss of precision, and with a semantic connection between that low-level representation and the desired notation. At this stage we’re certainly not going to revisit this decision!
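To illustrate the precision argument, here is a minimal sketch in Python. It is not the application's actual data model: the function names, the choice of quarter-note beats as the unit, and the 480 ticks-per-quarter resolution are all assumptions made for the comparison.

```python
from fractions import Fraction

# Assumption for this sketch: durations are measured in quarter-note beats.
SIXTEENTH = Fraction(1, 4)

def tuplet_durations(actual, normal, unit):
    """Durations of `actual` notes played in the time of `normal` notes of length `unit`."""
    each = unit * normal / actual  # exact rational arithmetic
    return [each] * actual

def tick_tuplet_durations(actual, normal, unit_ticks):
    """The same tuplet in integer ticks; the division has to truncate."""
    return [(unit_ticks * normal) // actual] * actual

# Seven sixteenths in the time of four: each note lasts exactly 1/7 of a beat.
septuplet = tuplet_durations(7, 4, SIXTEENTH)
beat_total = sum(septuplet, Fraction(0))

# At a hypothetical 480 ticks per quarter note, a sixteenth is 120 ticks.
tick_total = sum(tick_tuplet_durations(7, 4, 120))

print(septuplet[0], beat_total, tick_total)
```

With fractions, the seven notes add up to exactly one beat, and the value 1/7 still says "septuplet" to anything that reads it back. With ticks, the notes fall short of the 480 ticks in a beat, so the remainder has to be patched up somewhere.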

Regarding your idea of creating a per-note audio file, that seems a bit like overkill to me. Does anybody really have time to edit thousands of individual audio files in order to get the perfect rendition? Surely it would be cheaper and easier to print the score out on paper and get some real musicians to play it…?!

I understand that it is too late to change the basic structure of the software and as far as I can see the way you have done it will work perfectly for almost all the music likely to be written on it. Not many composers use multiple tempi etc.

I’ve thought a bit more about the per-note audio file idea and it might be possible and effective as an option for some notes. It would be perfectly possible to offer the option of creating one for a group of notes using a softsynth and then editing it, replacing it with something else or resetting it back to normal playback mode. Perhaps this is confusing the sound generation of a softsynth with that of MIDI playback and control, but I wonder if this is just a tradition that has formed for historical reasons, i.e. the fact that MIDI originally controlled hardware synths and the software ones were modeled on these. Perhaps this is worth a rethink?

please consider a linux version. i’m so tired of having to run junk proprietary operating systems (osx / windows) just for notation capabilities.

I hope you’ll take this as constructive criticism. We’re all busy but please read to the end.

At the risk of sounding snippy, my problem is that you’re ‘unveiling’ this thing in exactly the opposite manner to the one that interests people like -me-. It’s such a tease that a few of my peers and I, who were initially interested, have mentally checked out. What -we- care about is the other end… how it will integrate with Cubase.

No matter how superior this thing is in terms of the score engine, if it doesn’t significantly improve that link with the DAW it’s kind of ho-hum. -The- biggest issue I’ve had for YEARS with scoring is that the whole ‘integration’ issue is so painful. All my friends who make more money than I do simply hire another human to convert their MIDI to ‘real’ notation. And then with every change needed after every rehearsal or meeting with stakeholders I just -cringe- because I know it’s going to be painful.

I’m only writing this because I’m advocating for -integration-. It should be an order of magnitude easier/more reliable taking the MIDI from Cubase and converting it into usable notation.

Making a more beautiful/readable notator is just not that interesting to working stiffs like me.

I am deeply disappointed that this will be a -separate- app and -not- a DEEPLY integrated part of Cubase. ‘Score’… for all its faults, is a noble concept. I -hope- that I am 100% wrong about my concerns. Regardless, I wish you all success. You’re obviously deeply passionate about this stuff and I salute that.

Regards,

@JC: I believe we have a good understanding of the pain points in the kind of workflow you’re following. DAW-to-score and score-to-DAW are both important workflows that we intend to address with our application. We have some specific plans about ways to reduce the pain of those workflows, but I can’t share them at this point. As we get closer to release I’ll perhaps be able to provide more details.

The task of transcribing MIDI music into a legible, printable score and parts is daunting and extremely complex. To do it exactly right, artificial intelligence is required. This has been discussed at length, but is probably worth repeating. Music notation is a very imperfect tool for recording works of music. It is incredibly imprecise for anything beyond extremely simple, tonal and straight music (at the time of its invention and standardisation, such were the qualities of music). In order for it to be simple and legible, it relies on a large heap of assumptions, requiring plenty of experience from musicians. When we notate a piece of music, we omit or simplify large parts of its properties, because we rely on musicians who will, knowing the style, make up for those in their performance. We do so in order to make the work much more legible. While there is no doubt that it would be possible to notate much more detail using conventional musical notation, this is not done, as it would make the music profoundly illegible (if accurately transcribed).

This is why it is impossible to seamlessly integrate DAW and engraving in such a way as to enable the most optimal (note how I didn’t say accurate) transcription of recorded MIDI musical data.

Example: music notation allows us to use various symbols for articulation: accent, staccato, marcato, tenuto, etc. What would be the MIDI-recorded equivalent for these? How does one properly analyse / quantify such performance and decide which to use?

The expressional freedom we give ourselves as musicians while recording our music is critical in order to deliver an inspired performance. We make minute adjustments to tempo, extending or shortening the length of individual notes ever-so-slightly, playing those chords in slight arpeggios instead of together, slurring or separating notes in a melodic line, and none of these adjustments are easily quantifiable, measurable or consistent. It is an extremely difficult task to develop a set of algorithms that would analyse recorded MIDI, taking into consideration its abstract qualities, such as style, tempo, mood, character, etc, and then transcribe it into notation that will be legible, complete and reasonably accurate. In my experience, I always had to properly quantise my MIDI recording in order to get notation anywhere near usable. I believe the seamless fusion of DAW and notation is far away in the distance of computing, as it must reconcile two properties that are essentially as far away from each other as possible: the intuitive and abstract properties of music, and exact and precise data of MIDI.
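The quantising step mentioned above can be sketched in a few lines of Python. This is only an illustration of the general technique, not how any particular DAW or notation program does it; the function name, the sixteenth-note grid and the sample onsets are assumptions.

```python
from fractions import Fraction

def quantise(onsets_in_beats, grid=Fraction(1, 4)):
    """Snap each onset (measured in beats) to the nearest multiple of `grid`."""
    return [round(Fraction(t) / grid) * grid for t in onsets_in_beats]

# Hypothetical onsets from a slightly loose performance, in quarter-note beats.
played = [0.02, 0.98, 1.27, 2.1]
snapped = quantise(played)

print([str(t) for t in snapped])
```

The snapped onsets are easy to notate, but the small deviations that made the performance expressive are thrown away in the process, which is exactly the tension described here.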

I haven’t tried it in detail, so I cannot judge its benefits when working with more sophisticated stuff…

But, as far as I know (please, correct me if I’m wrong!), ScoreCloud/ScoreCleaner is the only scoring app at the moment that makes use of artificial intelligence to convert audio to MIDI/MusicXML…

Predrag, I hear your pain.

I have thought about these issues for years and have brought myself up to a high standard on piano for just this reason. However this does not make me a violinist and the way the bow is held and how it comes down on the string is a lifetime’s study, as is the art of sticking a bit of bamboo reed in a mouth. Yet, I see a couple of obstacles that could be surmounted.

Tempo – OK, some click tracks are necessary, but in a human performance a stunning performance would be way off exact, for expressive reasons. It’s not just that we tolerate inaccuracy with our ears, it’s the way tempi are crafted per PHRASE, each phrase being a collection of two or three different approaches to a string, reed, bang or pluck. Each instrumentalist has his own tempo track and he plays with it in creative ways: he might give a curving increase of tempo for that eight-note scalar run to the octave, where he hits that note loud and with extra attack… and so forth. I think we could build a way to follow timing in this way.

Secondly, articulation and articulation crafting. Each note is a universe in itself. On a real instrument you get to bend and squeak; with a suite of violin samples you do not, but you could have a feature that enabled you to a] choose the articulation, b] apply artistic effects like accenting the attack or shortening the decay. Expression maps are part of the answer, but they are very counterintuitive and techy.

Imagine going into the score and selecting a phrase on the viola line. You right click, you get to see a picture of a viola, and next to it are all the custom settings for this note. You can choose your basic articulation (sample), then you get to play with it in ways that are suitable for that kind of instrument, a stringed instrument. Because it’s a stringed instrument you get to apply string noise, you get to customise the length and attack of the staccato, you get to play in the vibrato window. Each instrument has its own interface within the score. Each note a universe.

I am sure that there are people out there who create their music in a DAW, then have it transferred / transcribed into an engraving tool for printing, but my completely uneducated guess is that the core group of users of engraving tools, such as Finale, Sibelius and all others this project is hoping to replace, rarely if ever uses a DAW. It is nice to hear that the team has specific plans for integration, but I believe most of us would love to focus energy on solving frustrating problems regarding engraving and printing our scores, rather than integrating with MIDI-based production tools.

With every new blog entry, I’ve been salivating more and more over the details regarding solutions to some of the issues that persist in Finale and Sibelius (and probably others, too), such as fixing the tied notes (i.e. when I move one of them, the other stays; or when I cancel an accidental on the first one, it puts it on the second; or when I add staccato to all notes in a bar, the tied notes get them as well…). There are so many announcements in these blogs that give me plenty of reason for hope. It is exciting to hold our collective breath and wait for the next announcement, bringing us one step closer to the dream tool.

Is that a screen shot of the new app in the lower right of the picture?

@Bill: No, I’m afraid not. That’s a PDF, I think. (Richard, our lead tester, spends a lot of his time creating test data in MusicXML, which means a lot of time transcribing music into Sibelius or Finale and then exporting MusicXML files so that they can be opened by our test harness.)

I just realized that I’d love to read a guest post by your lead tester.

Wow, this is an addicting game 😛

I finally managed to get two breves; if you join them they become a … ‘undefined’ …

Bummer.

It has been nearly two years since the announcement of the closure of the UK office of Sibelius. Like many, I am looking forward to the new notation software program with bated breath. Can’t get here soon enough…

Hi again.

After an hour or so of fighting with Sibelius trying to get a new score configured I find myself desperate, once again, for your new program! I’m afraid you’re no longer permitted to sleep, eat, socialize, etc…. nothing but coding! 🙂

I do have one request, though: Could you please make it simple (and powerful) to set up mappings between articulations, performance techniques, and VST/AU instrument sounds/settings? This whole closed(ish), proprietary thing (are you listening Sibelius, Notion?) is just a nightmare to deal with, and way too time consuming…

Also, it would be really great if the user could decide which plug-in each articulation would be assigned to. In my case, I’ve been playing around with the recent Blakus Cello, which is truly amazing. But since it doesn’t have harmonics (and other articulations I use often), I’d like to assign certain things to my VSL solo cello as well. Sibelius can be persuaded to do this, sometimes, and with a certain amount of chanting, naked moonlit dancing, and general mysticism… But it’s by no means simple (which it could/should be). The kinds of virtual instruments being created these days, e.g., from the likes of Embertone and Sample Modeling—to say nothing of the truly astounding “Serenade III” string model for NI Reaktor by Chet Singer—offer tremendous promise for computer-based composition. But the notation programs that are currently on offer lack the flexibility to get the best of all worlds. You guys can, without doubt, solve this problem!

Thanks, and please get us another update soon.

J.

Today, being Independence Day in America, I can’t help but recall two years ago when Steinberg declared independence day for Team Daniel and stepped up to invest in this very talented brain trust– with the goal of creating a completely new and fresh approach to music scoring and notational software.

The levels of expectation are high and we are all anxious to see the reveal. At times, I feel like an impatient adolescent waiting to open gifts on Christmas. However, trying to maintain some level of rational behavior, I believe Steinberg and Team Daniel will produce a product of the highest calibre for their maiden voyage.

Knowing that this new notation program stands on the shoulders of all previous notation products, I am confident that Steinberg and Team Daniel have completely thought through their technological approach to the extent that as hard as it is to relax and be calm, we must hold on until the appropriate time…

Thanks to Steinberg. Thanks to Team Daniel!

-dm

Hello again,

Is this software coming with an orchestral library? Will Steinberg develop an orchestral library to work with this software? Will all the articulations or effects match across the library/software combination?

Thank You

@Gyorgy: We haven’t fully figured out what kind of sample library content will ship with our application. Regardless of what ships with the product, it’s our goal to support a wide range of third-party virtual instruments as fully as possible, using Steinberg’s VST Expression Maps system.

Please work together with VSL! It’s simply the best orchestral library.

As long as all the strange little affectations of the VSL library are taken into account (for example, the ridiculously short sustain time on many of the legato samples, e.g., the piccolo perf-leg). If the program could automatically switch to a sustain sample after the legato transition, then great. Otherwise, I’m no longer convinced, I’m sorry to say (i.e., as a long-time VSL user, heavily invested in the library). Sample Modeling is pretty amazing, and Embertone’s violin and viola are kind of astounding too. Honestly, I feel like VSL has fallen behind in some ways…

hmm… just noticed I said “viola”, but Embertone doesn’t have a viola (yet). Apparently they’re working on one. I meant cello; the “Blakus” cello is pretty amazing.

Not to my taste, Michael. They omitted some basic things like natural and artificial harmonics on orchestral strings; you have just one kind of “artificial” articulation. In another competitor, yes, the samples aren’t as nice as VSL, but when we write music, it is exactly what a real performer can do.

Writing music is limitless, so we need a sample library that responds perfectly to what we have in mind. And yes, I agree with jamesbmaxwell: a sample-modeled library will knock out other competitors.

I am sorry to say that I am disappointed. It looks very chubby and extremely “classical”. This is not just an “aesthetic”, superficial judgment. It looks very poorly suited for contemporary, complex notation in which lines and graphic objects need to meld with “solfeggio” notation. It seems more intended for neo-classical notation than for that of modern music. Am I wrong?

@Alessandro: You’re welcome to your opinion, of course. The font is certainly classical in appearance: it is based on the punches used by one of the major German music publishers up until the middle point of the last century. It is intended to provide a bold, readable appearance, and is a reaction against the spidery and insubstantial music produced by most computer engraving programs. It is intended to work well both when printed on paper and when viewed on a screen, as it is going to be the case that more and more music notation is consumed directly from a screen rather than paper in the coming years, and those screens will have increasing pixel density, allowing better and finer reproduction of the detail of individual symbols.

But you don’t have to use Bravura if you don’t like it, and of course other music fonts may be better suited to different kinds of music, just as some text fonts are better suited to some kinds of publications than others. My hope is that the SMuFL standard will make it easier and more worthwhile for font designers to produce more music fonts, because they will have the certainty both that a consistent set of guidelines for metrics and registration exist, and that users of multiple applications will be able to make use of the fonts, thus reaching as large a potential user base as possible.

Will the software allow customising the music font (as the two competitors do), for example using the Boulez font?

@Alessandro: You will be able to use any third party music font. It will be easiest and smoothest if the font you choose to use is SMuFL-compliant (and I hope there will be a variety of SMuFL-compliant fonts in addition to Bravura available in future), but you will be able to use any font, provided you’re willing to put in the work to manually map the glyphs in the font in the appropriate places in the application.

It’s only been two months. Looking forward to your next update…

Great work so far, I’m really looking forward to seeing the finished product. But I also want to add my voice to those who ask you to consider supporting Linux. My Linux system is just perfect as a music workstation. Recently the first version of Bitwig Studio was released, which is a capable DAW and contender to Ableton Live that runs natively on Windows, Mac OS X and Linux. The only thing missing is professional notation software. The only reason why there aren’t more Linux users is the hesitance of commercial software developers to port professional software to Linux, while there are already a lot of Linux users who would be glad to buy the software licenses just to avoid dual booting or installing Windows in a virtual box.

The brain-like architecture, satisfying several constraints at the same time (if this is what it is doing), is interesting, and is a trend (Blue Brain project, IBM’s synapse chips).

Very theoretically, I’m thinking about music analysis of counterpoint, satisfying melody and harmony at once. Maybe biological neurology could inspire some algorithms for the binding of the parallel processes.

Would you recommend readings (books or research papers) that inspire your software architecture?

Great font! Considering contemporary scores we just hope that noteheads of chromatic mini-clusters (G G# A) won’t overlap. 🙂

Bravura’s traditional 19th-century look will be very much appreciated by (at least German) musicians, especially when playing “complex” contemporary scores.

aaaagh… okay, I’m having another Sibelius-induced misery day today… Any updates on your progress, Daniel et al.?

Looking forward to hearing about recent progress on the notation front.

As a user of Sibelius since Acorn Archimedes I derive great delight and anticipation from your new work with Steinberg, incorporating the vital legacy of music notation from several traditions, developing a new flexible workflow. This blog reflects that work and your enthusiasm for solutions, thank you.

When is your product’s approximate sale date?

May I remind you of those last three words of your posting? 🙂